Companies Are Limiting Powerful AI Tools to Prevent Misuse and Global Cyber Risks

Introduction

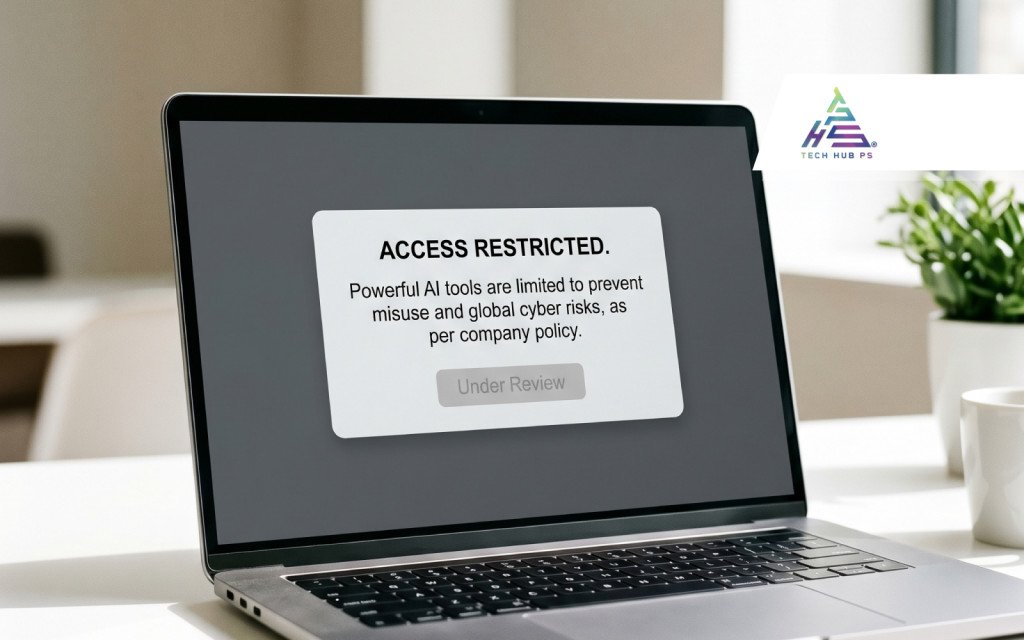

To address rising cybersecurity threats, companies worldwide are beginning to restrict access to advanced artificial intelligence tools. These measures aim to prevent misuse and protect digital systems from emerging global cyber risks.

Why Companies Are Restricting AI Tools

Organizations are increasingly aware of the dual-use nature of AI technologies. AI enhances productivity and innovation, but it also opens the door to misuse, including automated cyberattacks, data manipulation, and unauthorized system access. To mitigate these risks, companies are implementing stricter access controls and monitoring systems.

Rising Concerns Over AI Misuse

Powerful AI tools can be exploited for:

• Generating malicious code

• Automating phishing attacks

• Creating deepfakes and spreading misinformation

• Bypassing traditional security systems

New Security Measures Being Implemented

Many organizations are now:

• Limiting access to high-risk AI functionalities

• Introducing approval-based usage systems

• Monitoring AI interactions in real time

• Applying strict internal policies and compliance frameworks

Impact on Global Cybersecurity

These restrictions are expected to reduce large-scale cyber threats, give organizations more control over how AI is deployed, encourage responsible AI use, and strengthen global cybersecurity frameworks. However, they may also slow innovation and make these tools less accessible.

Balancing Innovation and Safety

The challenge for companies is balancing innovation and security. Restrictions improve safety, but excessive limitations could hinder technological growth and collaboration.

Conclusion

As artificial intelligence continues to evolve, companies are taking proactive steps to ensure it remains a force for good. Restricting access to powerful AI tools may be necessary to protect against misuse and safeguard the global digital landscape.